Self-Evolving Skills in Multi-Agent Systems

Skills became the npm of agentic AI in eight weeks. This is a survey of that explosion — and what an optimal architecture looks like.

"Skills are fragile, tools are expensive, and agents forget everything"

The old approach was brute-force: stuff every tool schema and instruction into the system prompt. This worked with 5 tools and 3 turns. It collapses at 50–200 tools running for hours across multiple agents. Four problems compound:

Schema overhead devours context

Connecting three typical MCP servers (GitHub, Slack, Sentry) consumes ~94,000 tokens of schema overhead — 47% of a 200K window — before any conversation begins. The RAG-MCP benchmark shows tool selection accuracy drops from 84–95% at 49 tools to 0–20% at 741 tools.[1]

Hand-written skills drift and decay

Static instructions degrade as codebases evolve. Without a feedback loop, the agent has no mechanism to distinguish helpful instructions from harmful ones. SkillsBench found self-generated skills provide ≈0pp net benefit — the model can't reliably author its own procedural knowledge without iteration.[2]

Every session starts from scratch

An agent that solves a complex debugging pattern on Monday has zero memory of it on Tuesday. Multi-session tasks see quality collapse: MemoryArena shows models "plummet to 40–60%" on interdependent multi-session problems. Long context is not memory.

More skills → worse performance

Skill selection undergoes a phase transition at a critical library size, but semantic confusability between skills, not raw library size, is the dominant driver of degradation. Adding skills past the cliff hurts the agent. SkillRouter found a 31–44pp accuracy drop when skill text is truncated.[3]

Skills aren't tool-calling wrappers anymore. They're the primary unit of reusable agent knowledge — versioned, composable, self-evolving. The story is convergence: three labs independently arrived at the same format, the same progressive disclosure, the same evolution loop.

"An open standard with no standards body"

Anthropic, OpenAI, and Google independently converged on the same skill format. No joint announcement. No standards body. The agentskills.io open standard defines a SKILL.md file — YAML frontmatter, Markdown body, git-native — now adopted by 30+ agent tools.[4]

① SKILL.md

The authored format

Name, description (routing metadata — not docs), workflow steps, input schema, output format. The description is the single most important field: it's what the router matches against. Negative examples reduce misfires by 20%.[5]

~100 tokens at discovery · up to 5K on activation

② PROGRESSIVE DISCLOSURE

Load only what you need

The runtime pattern that makes skills scalable. Advertise ~100 tokens per skill at boot (name + description). Load the full SKILL.md body only on activation. Fetch scripts and references on demand. Claude Code reduced tool context from 14,000 tokens to 968 — a 94% reduction.

HOT → WARM → COLD · 3-tier disclosure

③ EVOLUTION LOOP

Execute → reflect → mutate → validate

The closed-loop pattern that appears independently in at least 6 research groups. The skill library isn't static — it's a living artifact that improves with every agent interaction. EvoSkills achieves 71.1% pass rate vs. 53.5% for human-curated skills.[6]

self-evolving · no weight changes

Skills are not MCP. MCP connects one agent to many tools. Skills are an agent-to-agent capability layer — many agents, shared registry. Complementary, not competing.

    # SKILL.md specimen with evolution metadata
    ---
    name: api-conventions
    # negative examples in the description reduce misfires by 20%
    description: >
      REST API design conventions for this project.
      Activate when creating or modifying API endpoints.
      NOT for GraphQL. NOT for internal RPC calls.
    allowed-tools: Bash(npm run test:api *) Read Grep
    metadata:
      freyja:
        type: build
        triggers: [api endpoint, REST, route handler]
        confidence: verified  # ← lifecycle: unvalidated → experimental → verified → deprecated
        retrieval_count: 23
        success_signals: 19  # ← passive evolutionary selection pressure
        failure_signals: 2
        cold_tools: [custom_api_validator]  # ← 3rd-tier progressive disclosure
    ---
    # API Conventions

    [Step-by-step instructions follow...]

How a skill goes from idea to production to garbage

A skill enters the system, proves itself (or doesn't), accumulates trust (or decays), and eventually gets retired. The retired skill's execution traces feed back into the system as raw material for the next generation. This loop is the difference between a skill library that compounds over time and one that silently rots.

① How skills enter the system

Every skill we surveyed entered through one of four pathways. The mix matters — systems that rely solely on human authoring plateau fast; the ones that also mine traces and evolve from failures compound.

Human-authored

A developer writes a SKILL.md by hand. Still the dominant pathway for seed skills — Memento started with 5 hand-crafted seeds and grew to 235 autonomously.[M1]

Extracted from traces

ProcMEM distils raw trajectories into (Activation, Execution, Termination) tuples — 26× compression: 102 tokens per skill vs. 2,675 tokens per trajectory. 92.5% in-domain reuse rate.[M2]

Mined from repositories

Code modules are converted to SKILL.md via dense retrieval + cross-encoder reranking. Repository mining automates the human-authoring bottleneck at scale.[M3]

Evolved from failures

Memento-Skills: when utility drops below threshold δ after n_min invocations, the system triggers DiscoverSkill or OptimiseSkill. Failure is the mother of invention.[M1]

Not every trace becomes a skill. The gate logic, reconstructed from ProcMEM and AutoRefine:

    Trace received
    │
    ├─ One-off pattern? ──────────────────── → discard
    │
    ├─ Recurring pattern detected?
    │  │
    │  ├─ Complex / stateful? ──────────── → Subagent pattern (AutoRefine)
    │  │
    │  └─ Procedural / deterministic? ──── → Skill candidate
    │     │
    │     ├─ PPO-Gate: better than existing baseline?
    │     │  └─ no ─────────────────────── → reject (ProcMEM trust-region verification)
    │     │
    │     └─ Similar skill exists?
    │        ├─ yes ────────────────── → MERGE (version bump)
    │        └─ no ─────────────────── → NEW (mint v0.1.0)
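The gate above reads naturally as a chain of early returns. A minimal sketch, assuming each question in the tree has already been reduced to a boolean: the `Trace` fields and the `Decision` names are hypothetical stand-ins, not an API from ProcMEM or AutoRefine.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    DISCARD = "discard"
    SUBAGENT = "subagent pattern"
    REJECT = "reject"
    MERGE = "merge (version bump)"
    NEW = "new (mint v0.1.0)"


@dataclass
class Trace:
    # Hypothetical signals; a real system would compute these from the trace.
    recurring: bool        # has the pattern been seen more than once?
    stateful: bool         # complex / stateful rather than procedural?
    beats_baseline: bool   # outcome of the PPO-Gate style comparison
    similar_exists: bool   # does a near-duplicate skill already exist?


def gate(trace: Trace) -> Decision:
    """Walk the decision tree from top to bottom, returning at the first exit."""
    if not trace.recurring:
        return Decision.DISCARD
    if trace.stateful:
        return Decision.SUBAGENT
    if not trace.beats_baseline:
        return Decision.REJECT
    return Decision.MERGE if trace.similar_exists else Decision.NEW


if __name__ == "__main__":
    print(gate(Trace(recurring=True, stateful=False,
                     beats_baseline=True, similar_exists=False)))
```

The ordering matters: the cheap checks (recurrence, statefulness) run before the expensive baseline comparison, so most traces exit early.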

② How skills earn trust

A new skill is unproven. It enters a maturity ladder gated by statistical evidence, not time — each rung requires passing increasingly strict evaluation thresholds. PSN formalises this with a maturity-aware update probability:

P(update | σ) = 1 − sigmoid(V(σ) / threshold) + ε_min

As V(σ) accumulates, the skill's update probability decays toward ε_min — a small floor that keeps even verified skills open to rare corrections. This is the mechanism that turns a noisy candidate library into a stable, high-confidence production library. Without it, every skill is permanently volatile.
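To make the decay concrete, here is a small sketch that evaluates the formula exactly as written. The default `threshold` of 1.0 and `eps_min` of 0.01 are illustrative assumptions, and the result is clipped to 1.0 so it stays a valid probability. Under this literal reading a skill with V(σ) = 0 sits near 0.5, and the probability approaches 1 only for negative V(σ); whether PSN offsets or rescales V(σ) is not stated here.

```python
import math


def sigmoid(x: float) -> float:
    """Numerically stable logistic function."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)


def update_probability(value: float, threshold: float = 1.0,
                       eps_min: float = 0.01) -> float:
    """P(update | sigma) = 1 - sigmoid(V / threshold) + eps_min, clipped to 1.0.

    threshold and eps_min are assumed values chosen for illustration only.
    """
    return min(1.0, 1.0 - sigmoid(value / threshold) + eps_min)


if __name__ == "__main__":
    for v in (-5.0, 0.0, 1.0, 3.0, 10.0):
        print(f"V={v:>5}: P(update)={update_probability(v):.4f}")
```

As the value estimate grows, the output falls monotonically and flattens at `eps_min`, which is the floor that keeps verified skills correctable.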

Raw candidate, no evidence

Just crystallised from a trace or authored by hand. Update probability ≈ 1.0. Every invocation result feeds back into the skill's body. High churn is expected — most skills die here.

Early positive signal

Passed initial unit-test gates (Memento-Skills) or achieved positive Q-value (ProcMEM). Update probability ~0.6. The skill begins receiving real traffic via exploration routing.

Statistically trusted

EMA Q-value exceeds confidence threshold over a sustained window. Update probability drops below 0.15. The skill is now "frozen" — invoked frequently, modified rarely. Refactoring (PSN structural rewrites) may still apply.

Flagged for retirement

Base model passes the skill's eval without it (model absorption), or utility drops below δ. The skill enters a grace period — still callable, but no longer routed to by default. One more failure triggers full retirement.

③ Where skills live: the memory stack

Skills don't exist in isolation — they sit at the top of a three-layer memory architecture. PlugMem (MSR/UIUC) formalises the pattern every successful system builds: episodic memory stores raw execution traces, semantic memory distils those into indexed facts, and procedural memory compresses the facts into reusable skills. Each layer is a compression step. Skipping layers produces brittle, overfit skills.

Episodic memory is raw substrate, not a product. Skip the semantic denoising step and skills overfit to specific execution traces. ProcMEM: 26× compression ratio — ~100 tokens per skill vs. ~2,675 per trajectory. That compression is the point.

④ When to kill a skill

A skill library that only grows will eventually drown the agent. AutoRefine measured this directly: without active pruning, repositories grow 4.5× while utilisation degrades 8.9×. Stale skills don't just waste tokens — they cause misfires when the router selects a nearly-correct skill instead of the actually-correct one. Three signals tell you it's time to cut:

Score-based pruning

ProcMEM fires when online Q-value ≤ 0 or a duplicate is detected. Binary and immediate — the skill is deleted, not archived. Appropriate for skills that were never validated past the experimental stage.[M2]

Utility-threshold redesign

Memento: when U < δ after n_min invocations, the system doesn't delete; it escalates to DiscoverSkill, treating low utility as a signal that the skill boundary was wrong. Redesign, not deletion.[M1]

Model obsolescence

The subtlest death: the base model absorbs the skill's knowledge. SkillReducer audited 55,315 skills — 10.7% already obsolete. The test: disable, re-run. If the pass rate holds, retire it.[M4]
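The three signals come from different systems, but they can be combined into one audit pass. The sketch below is one possible arrangement, not a method from any of the cited papers: the `SkillStats` fields, the check order, and the `tolerance` parameter are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class SkillStats:
    q_value: float             # online Q-value (score-based pruning signal)
    utility: float             # utility U (utility-threshold signal)
    invocations: int           # number of times the skill has been invoked
    is_duplicate: bool = False


def retirement_action(stats: SkillStats, delta: float, n_min: int,
                      pass_rate_with: float, pass_rate_without: float,
                      tolerance: float = 0.0) -> str:
    """Return 'retire', 'delete', 'redesign', or 'keep' for one skill."""
    # Model obsolescence: disable the skill, re-run, and compare pass rates.
    if pass_rate_without >= pass_rate_with - tolerance:
        return "retire"
    # Score-based pruning: non-positive Q-value or a detected duplicate.
    if stats.q_value <= 0 or stats.is_duplicate:
        return "delete"
    # Utility threshold: only judged after enough invocations.
    if stats.invocations >= n_min and stats.utility < delta:
        return "redesign"
    return "keep"


if __name__ == "__main__":
    healthy = SkillStats(q_value=0.4, utility=0.9, invocations=50)
    print(retirement_action(healthy, delta=0.5, n_min=10,
                            pass_rate_with=0.9, pass_rate_without=0.6))
```

Checking obsolescence first is a design choice: if the base model no longer needs the skill, there is little point in redesigning it.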

Why the skill exists determines when to kill it

Meta AI's taxonomy — the reason a skill was created predicts its shelf life:

PERFORMANCE-GAP

Compensates for a model weakness. Shortest lifespan. Dies when the model improves. Example: a skill teaching JSON output formatting — unnecessary once the model handles structured output natively.

PREFERENCE-ENCODING

Encodes user/org-specific taste. Medium lifespan. Persists until preferences change. Example: "always use British spelling" — model-agnostic, but user-mutable.

EVAL-ANCHORED

Pinned to a specific evaluation benchmark. Longest lifespan. Dies only when the eval is retired. Example: a skill tuned to pass a compliance audit — survives model upgrades.

OpenClaw and LangChain run a background process that periodically replays traces, re-evaluates utility, and triggers retirement or reinforcement — no user interaction needed. This is the mechanism that keeps a library clean without manual curation. If a skill can't justify its existence when the system audits it in the background, it gets pruned.

What every major lab is shipping

Every major lab shipped skill primitives in the same 90-day window. The convergence is the signal.

Skills in ChatGPT + Codex Plugins (Feb–Mar)

Skills as a first-class primitive. Three archetypes: process (workflows), tool-based (API wrappers), convention (coding standards). The critical insight: skill descriptions are routing metadata, not documentation. Tell the system when to invoke, not how. Negative examples ("NOT for GraphQL") reduce misfires by 20%.[5]

Open-Source Maintenance with Skills (Mar 9)

An 8-skill SDK catalog achieved +45% PR throughput. Description quality IS the routing bottleneck — not instruction quality.[7]

Codex Plugins (Mar 26)

Skills evolved into Plugins — the installable distribution unit. SKILL.md → Plugin mirrors module → npm package.

Sources: openai.com · developers.openai.com

Measuring Agent Autonomy (Feb 18)

Autonomous work time doubled in 3 months (25→45 min at p99.9). Agents ask for clarification 2× more — a feature, not a bug.[8]

Harness Design for Long-Running Apps (Mar 24)

Generator+Evaluator loop with sprint contracts. Key finding: "context anxiety" (Sonnet 4.5) vs. full compaction (Opus 4.6) — different models handle skill-loaded contexts differently. Skill eviction follows the same sigmoid as context eviction: early, not late.

2026 Agentic Coding Trends Report

Three of eight trends directly amplify skills: single agents → teams, long-running agents (days/weeks), oversight via AI review. Production: 5× productivity at Rakuten, 13K solutions at TELUS.[9]

Closing the Knowledge Gap with Agent Skills (Mar 25)

A single gemini-api-dev skill: 6.8% → ~100% accuracy on Gemini SDK tasks. The most dramatic skill-impact number in the literature. Caveat: weaker models get diminishing returns from the same skill.[10]

Gemma 4: Agentic Skills at the Edge (Apr 2)

On-device skill execution: four categories in <3s, <1.5GB RAM. Skills decouple capabilities from model size: a 3B model with the right skills outperforms a 70B model without them.

Scaling Agent Systems (Google Research, Jan 28)

When do multi-agent setups help? +80.9% on parallelisable tasks. −39% to −70% on sequential ones. 87% accuracy predicting optimal architecture from task features.[11]

Ranking Engineer Agent / REA (Mar 17)

Production-deployed at Meta. Hibernate-and-Wake: the agent sleeps between tasks but wakes with skills intact. 2× accuracy, 5× productivity. Not a benchmark — a production deployment serving real engineers.[12]

KernelEvolve (Apr 2)

Self-evolving kernel optimization. MCTS + evolutionary search over skill combinations. 60% throughput improvement on NVIDIA GPUs, 25% on MTIA. These are not code templates but optimization strategies discovered autonomously.[13]

HyperAgents (Mar 24)

Metacognitive self-modification. The agent doesn't just evolve skills; it evolves the evolution process itself. Outperforms across 4 domains. Terrifying and compelling in equal measure.[14]

PlugMem: Procedural Memory as Primary Reuse Unit (Mar 10)

MSR's key insight: procedural memory should be the primary reuse unit, not episodic or factual. PlugMem stores skills in a knowledge graph as first-class nodes — connected to the facts they need and the contexts they fit.[15]

CORPGEN: Multi-Horizon Task Environments (Feb 26)

12–46 simultaneous tasks, 3.5× baseline completion. File-output eval: 90% accuracy vs. 40% for screenshots. Skills need verifiable outputs to enable evolution.[16]

Microsoft Agent Framework v1.0 (Apr 3)

Skills as first-class SDK primitives via SkillsProvider. YAML declarative agents with A2A + MCP integration. The framework makes skills a native platform concept, not a library feature — every agent built on MAF has skill support by default.

Agent Toolkit + OpenShell (GTC 2026, Mar 16)

"Open Skills" as a platform layer. Self-evolving Claws that write their own code. OpenShell provides out-of-process policy enforcement — skills execute sandboxed, a separate watcher kills policy violations before they affect the host.

Nemotron 3 Family (Mar 24)

The model-as-skill paradigm. Configurable thinking budget: 1K tokens → basic skills, 32K → deep reasoning. RL across 10+ environments ensures transfer.

MAIW Blueprint (Jan 9)

6 role-specialized agents, MCP shared tool layer, GPU-accelerated skill execution. First reference architecture treating skill latency as a first-class constraint.

How skills evolved from static files to living organisms

Six eras, each building on the last.

Hand-authored, never updated

Skills were Markdown files written by humans and loaded verbatim into agent context. No feedback loop, no quality signal, no evolution. The agent consumed them passively. SkillsBench confirmed the failure mode: self-generated skills without iteration provide ≈0pp net benefit — sometimes actively harmful.

The fundamental limit: without a fitness signal, there's no selection pressure. Bad skills persist forever alongside good ones.

self-generated ≈ 0pp benefit without iteration

Load only what you need, when you need it

The runtime breakthrough that made skill libraries scalable. Claude Code's adoption of defer_loading: true reduced system tool context from ~14,000 to 968 tokens. Our measurements: progressive disclosure reduces per-session cost from $3.00 to $0.32 for a 100-tool system across a 20-turn session.

The three-hop discovery path: query → skill description → load_skill(name) → full body + tools. Promotion is sticky within a session — once loaded, a tool stays visible.
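The discovery path and the sticky promotion rule can be sketched in a few lines. This is an illustrative in-memory model, not Claude Code's implementation: only `load_skill(name)` comes from the text above, while the `Skill` and `Session` classes and their other methods are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Skill:
    name: str
    description: str                  # short routing text advertised at boot
    body: str                         # full SKILL.md body, loaded on activation
    tools: list[str] = field(default_factory=list)


class Session:
    """Tracks which skills have been promoted during one session."""

    def __init__(self, skills: list[Skill]):
        self._skills = {s.name: s for s in skills}
        self._loaded: set[str] = set()

    def advertised(self) -> list[str]:
        # Hop 1: only name + description is visible before activation.
        return [f"{s.name}: {s.description}" for s in self._skills.values()]

    def load_skill(self, name: str) -> str:
        # Hops 2 and 3: activation returns the body and promotes the tools.
        self._loaded.add(name)
        return self._skills[name].body

    def visible_tools(self) -> list[str]:
        # Sticky promotion: nothing is demoted for the rest of the session.
        return sorted(t for n in self._loaded for t in self._skills[n].tools)


if __name__ == "__main__":
    session = Session([Skill("api-conventions", "REST API design conventions",
                             "# API Conventions", ["custom_api_validator"])])
    print(session.visible_tools())
    session.load_skill("api-conventions")
    print(session.visible_tools())
```

The context cost at boot scales with the length of `advertised()`, not with the size of the bodies or tool schemas, which is where the savings come from.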

Execute → fail → reflect → mutate → validate

The dominant research pattern of Q1 2026, appearing independently in at least 6 groups: EvoSkills[6], SkillRL[17], MetaClaw[18], SAGE[19], AutoSkill[20], and ProcMEM[21]. No weight changes — just textual mutation and validation.

EvoSkills co-evolves a Skill Generator + Surrogate Verifier with no ground truth needed. 71.1% pass rate vs. 53.5% human-curated, 30.6% baseline. The evolved skills beat humans.

evolved skills > human-curated

The right skill at the right time

The 2026 shift: from embedding similarity to RL-trained behavioural routers. SkillRouter[3] achieves 74% Hit@1 over ~80K skills with a 1.2B full-text retrieve-and-rerank pipeline — 13× fewer params, 5.8× faster than baselines. SkillOrchestra[22] adds performance-cost trade-off routing: +22.5% over SOTA with 700× less learning cost than Router-R1.

Memento-Skills[23] proved it decisively: RL-trained routing achieves 80% task success vs. 50% for BM25, with GAIA +13.7pp and HLE doubled (38.7% vs 17.9%).

80% vs 50% task success (RL vs BM25)

Atomic → functional → strategic

SkillX[24] auto-constructs a 3-tier hierarchy from execution trajectories: atomic skills (single operations), functional skills (composed workflows), strategic skills (high-level plans). SkillCraft[25] demonstrates up to 80% token reduction through compositional skill caching and reuse.

ARISE[26] adds hierarchical reward co-evolution: a Skills Manager builds and retrieves from a tiered library while a Worker agent generates responses. The two co-evolve their reward signals. Outperforms GRPO-family methods on 7 benchmarks including Omni-MATH.

80% token reduction via skill reuse

The agent evolves how it evolves

HyperAgents[14] introduced metacognitive self-modification: the meta-level improvement procedure is itself editable. This is recursive self-improvement applied to skill management. The agent doesn't just optimise its skills — it optimises the process by which it optimises its skills.

Constitutional Evolution[27] used LLM-driven genetic programming to discover behavioural norms across multi-agent societies. Evolved constitutions beat human-designed ones by +123% in societal stability with 98.6% less communication.

recursive self-improvement, no formal safety guarantees

50+ papers in 90 days, organized by theme

The Feb–April 2026 window produced an extraordinary density of skill-related research. We tracked 50+ papers across 8 parallel investigation threads. These are the ones that matter.

| Paper | Date | Key Technique | Results |

|---|---|---|---|

| EvoSkills | Apr 2 | Skill Generator + Surrogate Verifier co-evolve; no ground truth needed | 71.1% pass vs 53.5% human-curated[6] |

| SkillRL | Feb 9 | SkillBank (hierarchical distillation) + adaptive retrieval + recursive co-evolution with RL policy | +15.3% over baselines[17] |

| MetaClaw | Mar 17 | LLM evolver (zero downtime) + Opportunistic Policy Optimization (LoRA RL in idle windows) | 21.4% → 40.6% accuracy[18] |

| SAGE (Amazon) | Mar 10 | RL-based (GRPO) Sequential Rollout chains; skill-integrated reward signal | +8.9% completion, 59% fewer tokens[19] |

| AutoSkill | Mar 1 | Lifelong learning from dialogue traces; dynamic injection without retraining; model-agnostic | Cross-user, cross-agent skill transfer[20] |

| Memento-Skills | Mar 19 | Agent-designing-agent; RL-trained skill router; unit-test gate prevents regression | GAIA +13.7pp; HLE doubled[23] |

| CODE-SHARP | Feb 10 | Foundation Models expand/refine hierarchical skill archive (directed graph of reward programs) | >134% avg improvement[28] |

| HyperAgents | Mar 24 | Metacognitive self-modification — meta-level procedure itself is editable | Outperforms DGM on 4 domains[14] |

| EvoSkill (Sentient) | Mar 3 | 3-agent loop (Executor + Proposer + Skill-Builder); Pareto frontier selection | OfficeQA +7.3%, SealQA +12.1%[29] |

| Paper | Date | Key Technique | Results |

|---|---|---|---|

| SkillRouter | Mar 23 | 1.2B full-text retrieve-and-rerank over ~80K skills; progressive disclosure matters (31–44pp drop without) | 74.0% Hit@1; 13× fewer params[3] |

| SkillOrchestra | Feb 23 | Skill-aware orchestration; fine-grained learning from execution experience; cost-performance routing | +22.5% over SOTA; 700× less learning cost[22] |

| SkillFlow | Mar 27 | 4-stage retrieval pipeline (dense → rerank×2 → LLM selection) over 36K SKILL.md files | Pass@1 78.3% improvement[30] |

| SkillClaw | Apr 9 | Cross-user collective evolution via Agentic Evolver; shared skill repo synced across users | WildClawBench improvements[31] |

| Paper | Date | Key Technique | Results |

|---|---|---|---|

| SkillX | Apr 6 | 3-tier hierarchy (strategic → functional → atomic) auto-constructed from trajectories | Iterative refinement + exploratory expansion[24] |

| EffiSkill | Mar 29 | Operator Skills (concrete) + Meta Skills (strategic) mined from slow/fast program pairs | Execution-free optimization[32] |

| ScienceClaw+Infinite | Mar 15 | 300+ interoperable scientific skills; plannerless coordination via artifact broadcasting | Pressure-based scoring[33] |

| AutoRefine | Jan 30 | Dual-form Experience Patterns: specialized subagents + skill guidelines; continuous scoring | ALFWorld 98.4%, TravelPlanner +15pp[34] |

| PSN | Jan 7 | Programmatic Skill Networks — executable symbolic programs forming composable evolving networks | MineDojo + Crafter[35] |

| Paper | Date | Key Technique | Results |

|---|---|---|---|

| From MAS to Single-Agent | Apr 2 | Metric Freedom (F) predictor for skill distillation utility; 2-stage adaptive distillation | +28% lift; 8× cost reduction[36] |

| SkillMOO | Apr | NSGA-II multi-objective evolutionary optimization over skill bundles | +131% pass rate, −32% cost[37] |

| AgentCPM | Feb 6 | Atomic skill RL as distinct training phase before holistic pipeline RL | 8B model matches closed-source[38] |

| Agent Primitives | Feb 3 | 3 reusable latent sub-skills (Review, Vote, Plan+Execute) compose complex MAS | No hand-crafting needed[39] |

| Paper | Date | Key Technique | Results |

|---|---|---|---|

| Constitutional Evolution | Feb 3 | LLM-driven genetic programming discovers behavioural norms; multi-island evolution | +123% stability, 98.6% less comm.[27] |

| OMAR | Feb 4 | Single model plays all roles; hierarchical advantage estimation; emergent social skills | Emergent empathy, persuasion[40] |

| ABSTRAL | Mar 24 | MAS architecture as evolving NL document; contrastive trace analysis discovers specialist roles | Discovers absent roles[41] |

| ProcMEM | Feb 2 | Skill-MDP formalization; Non-Parametric PPO for skill generation + verification | Cross-agent transfer[21] |

| Benchmark | Date | Key Finding |

|---|---|---|

| SkillsBench | Feb 13 | 86 tasks, 11 domains, 7,308 trajectories. Curated skills: +16.2pp. Self-generated: ≈0pp net benefit. Smaller models + skills match larger models without.[2] |

| SkillCraft | Feb 28 | Benchmark for compositional skill formation + caching + reuse. Up to 80% token reduction from skill reuse.[25] |

| MetaClaw-Bench | Mar 17 | Evaluates fast adaptation for evolving agent workloads. MetaClaw: 21.4% → 40.6% accuracy.[18] |

| Agent Skills Empirical | Feb 8 | 40,285 marketplace skills audited. 46.3% duplicates. 9% critical-risk (L3). 18.5× growth in 20 days.[42] |

| ADeLe (Nature, MSR) | Apr 1 | 18-ability profiling model. 88% accuracy predicting performance on unseen tasks. Principled vocabulary for agent skills.[43] |

Skills aren't standalone — they form networks

Skills are nodes in an interaction graph. Value comes from composition — how they chain, branch, loop, and contradict. The hard problem isn't writing skills; it's making them compose reliably.

PSN's Programmatic Skill Networks

Programmatic Skill Networks (PSN) is the deepest treatment of composition we found.[PSN] Skills are symbolic programs — not prompt snippets — with typed preconditions and postconditions forming a directed acyclic graph. Composition happens through explicit invocation links:

f → g → h

Skill f calls g, then h. Each postcondition satisfies the next precondition. The simplest composition — and the most common.

if precond: A else B

Runtime branching based on precondition checks. Enables context-sensitive behaviour without separate skills for each branch.

until postcond(σ)

Repeated invocation until a postcondition is satisfied. Used for iterative refinement — polish a draft, retry a flaky API, converge on a threshold.

f ∥ g — absent

No parallel composition in the current PSN spec. This is the obvious next frontier — and the hardest, because it requires conflict resolution at the postcondition level.

The planner uses backward chaining to select skills: select_skill(goal) = argmax over {σ : postcond(σ) ⊇ goal} of V(σ). In words, among the skills whose postconditions cover the goal, pick the one with the highest value estimate V(σ).
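The selection rule translates almost directly into code. A minimal sketch, assuming postconditions and goals are modelled as sets of string labels; the `Skill` dataclass and the example skills are hypothetical and not taken from the PSN paper.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class Skill:
    name: str
    postconditions: frozenset   # facts guaranteed to hold after the skill runs
    value: float                # value estimate V(sigma)


def select_skill(goal: frozenset, library: Iterable[Skill]) -> Optional[Skill]:
    """Return the highest-value skill whose postconditions cover the goal."""
    candidates = [s for s in library if s.postconditions >= goal]
    return max(candidates, key=lambda s: s.value, default=None)


if __name__ == "__main__":
    library = [
        Skill("quick_build", frozenset({"built"}), value=0.9),
        Skill("build_and_test", frozenset({"built", "tested"}), value=0.7),
        Skill("full_pipeline", frozenset({"built", "tested", "deployed"}), value=0.4),
    ]
    chosen = select_skill(frozenset({"built", "tested"}), library)
    print(chosen.name if chosen else None)
```

Note that coverage is a hard filter and value only ranks the survivors: a high-value skill that misses part of the goal is never selected.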

2-Phase Credit Assignment — PSN's crown jewel

When a composed skill chain fails, which skill is at fault? PSN's answer is a two-phase process that's structurally analogous to backpropagation in neural networks — but operates on symbolic programs instead of tensors.

Phase I walks the execution trace top-down, decomposing failures into root causes. Phase II applies fixes bottom-up, leaves first, ensuring child updates are settled before parents re-compose.
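The two phases map onto two tree traversals. The sketch below is a simplified reading of the description above, assuming the execution trace is a call tree with a failure flag on each node; the `Call` structure and both function names are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Call:
    skill: str
    failed: bool
    children: list["Call"] = field(default_factory=list)


def root_causes(call: Call) -> list[str]:
    """Phase I: walk top-down and blame the deepest failed calls."""
    if not call.failed:
        return []
    blamed = [name for child in call.children for name in root_causes(child)]
    # A failed call with no failed children is itself a root cause.
    return blamed or [call.skill]


def repair_order(call: Call) -> list[str]:
    """Phase II: post-order over failed calls, so children settle first."""
    if not call.failed:
        return []
    order = [name for child in call.children for name in repair_order(child)]
    return order + [call.skill]


if __name__ == "__main__":
    trace = Call("deploy", True, [
        Call("build", False),
        Call("test", True, [Call("lint", True), Call("unit", False)]),
    ])
    print(root_causes(trace))
    print(repair_order(trace))
```

In this example the blame lands on `lint` alone, while the repair order visits `lint`, then `test`, then `deploy`, mirroring the leaves-first rule.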

The maturity gate is critical: P(update | σ) = 1 − sigmoid(V(σ) / threshold) + ε_min. A skill with a high value estimate V(σ), meaning it reliably succeeds, is unlikely to be updated. Immature skills with low V(σ) stay plastic. The ε_min floor ensures even mature skills can be corrected if they're genuinely broken.

5 Canonical Refactor Patterns

PSN performs structural refactoring via five graph rewrites. These shrink the library over time — the opposite of what you'd expect from an additive system.

| Pattern | Trigger | Action |

|---|---|---|

| Parametric Coverage | Specialised variant duplicates a general skill | Replace with parameterised wrapper |

| Behavioral Coverage | Skill reimplements existing subskill behavior | Replace body with call to existing skill |

| Sibling Specialisations | Multiple skills share structure, differ in detail | Extract abstract parent + specialised overrides |

| Common Subskill Extraction | Repeated code block across multiple skills | Extract shared subskill, update callers |

| Duplication Removal | Two skills are functionally identical | Merge into one, redirect all references |

Knowledge Activation's Continuation Graphs

Where PSN uses top-down planning, Knowledge Activation takes a decentralised approach. Each skill has three typed continuation edges — success, failure, escalation — and the agent traverses locally with no central orchestrator. Topology validation at commit-time catches cycles, uniqueness violations, and dangling references before deployment. It's less powerful than PSN's planner but dramatically simpler to reason about at scale.
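Commit-time validation of this kind is a few graph checks. A sketch under stated assumptions: skills arrive as `(name, edges)` pairs, each edge maps one of the three continuation types to a target skill name or to nothing, and any cycle counts as an error. The data layout and error strings are hypothetical.

```python
from typing import Optional

EDGE_TYPES = ("success", "failure", "escalation")


def validate_topology(skills: list[tuple[str, dict[str, Optional[str]]]]) -> list[str]:
    """Return a list of topology errors; an empty list means the graph is valid."""
    errors = []
    names = [name for name, _ in skills]
    for name in sorted(set(names)):
        if names.count(name) > 1:
            errors.append(f"duplicate skill: {name}")
    graph = dict(skills)  # last definition wins for the remaining checks
    for name, edges in graph.items():
        for kind in EDGE_TYPES:
            target = edges.get(kind)
            if target is not None and target not in graph:
                errors.append(f"dangling {kind} edge: {name} -> {target}")

    # Depth-first search with three colours to detect a cycle.
    WHITE, GREY, BLACK = 0, 1, 2
    colour = {name: WHITE for name in graph}

    def visit(node: str) -> bool:
        colour[node] = GREY
        for kind in EDGE_TYPES:
            nxt = graph[node].get(kind)
            if nxt is None or nxt not in graph:
                continue
            if colour[nxt] == GREY or (colour[nxt] == WHITE and visit(nxt)):
                return True
        colour[node] = BLACK
        return False

    for name in graph:
        if colour[name] == WHITE and visit(name):
            errors.append(f"cycle reachable from: {name}")
            break
    return errors


if __name__ == "__main__":
    print(validate_topology([("a", {"success": "b"}), ("b", {"failure": "a"})]))
```

Running all three checks before deployment is what lets the agent traverse locally at runtime without a central orchestrator guarding against bad edges.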

SkillCraft found that flat libraries outperform hierarchical ones — errors propagate and compound through nested compositions.[SC] But PSN demonstrates that hierarchy does work when paired with 2-phase credit assignment. The reconciliation is clear: hierarchy needs a feedback mechanism. Without credit assignment, a failure in a leaf skill silently corrupts the entire chain. With it, blame is localised and repair is surgical.

STEPS Compositional Threshold

Training on binary skill combinations (k=2) triggers a sharp performance jump: WB-Score goes from −22.75 to +23.91. But k>4 degrades performance. This suggests a fundamental ceiling on how many skills can be effectively composed in a single operation — and it's lower than anyone expected.

How Conflicts Are Resolved

When composed skills disagree — or when multiple skills claim authority over the same goal — the system needs a resolution hierarchy. Across the literature, we see the same four-layer pattern emerge independently in multiple frameworks:

Most conflicts resolve at Layer 1 (milliseconds, deterministic). Each successive layer is slower but handles higher-ambiguity cases. Layer 4 is reserved for decisions involving stakes, novelty, or ethical judgment that no automated system should make alone.

Symbolic-MoE[SMoE] operates at Layer 3: it builds skill profiles from validation performance, selects top-k experts per query instance, and uses a task-specific aggregator LLM to synthesise conflicting outputs in a single round. PSN adds its own constraint at Layer 1: a rolling buffer of 5 recent proposals enforces consistency — contradictory edits to the same skill within the window are rejected before they enter the system.
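The rolling-buffer constraint is easy to prototype. The sketch below assumes a proposal is a `(skill, field, value)` triple and that two proposals contradict when they set the same field of the same skill to different values; that definition of "contradictory" is an assumption for illustration, not PSN's.

```python
from collections import deque


class ProposalBuffer:
    """Keeps the most recent accepted proposals and rejects contradictions."""

    def __init__(self, window: int = 5):
        self._recent = deque(maxlen=window)

    def submit(self, skill: str, field: str, value: str) -> bool:
        """Return True if accepted, False if it contradicts a recent proposal."""
        for s, f, v in self._recent:
            if s == skill and f == field and v != value:
                return False
        self._recent.append((skill, field, value))
        return True


if __name__ == "__main__":
    buf = ProposalBuffer()
    print(buf.submit("deploy", "timeout", "30s"))
    print(buf.submit("deploy", "timeout", "60s"))
```

Because rejected proposals never enter the buffer, a contradictory edit can only succeed once the original has aged out of the window.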

Dependency Management — the agent.lock proposal

As skill libraries grow, the problem shifts from "how do I compose skills?" to "how do I know which version of which skill I'm composing with?" The npm parallel is no longer a metaphor — it's a direct analogy.

    [skills.web_search]
    name = "web_search"
    version = "1.3.2"
    sha256 = "a7f3c9e2d1b4..."
    publisher = "acme-corp"
    review_status = "audited"

    [skills.code_review]
    name = "code_review"
    version = "0.8.1"
    sha256 = "e5d2a1f8b3c6..."
    publisher = "internal"
    review_status = "pending"

The agent.lock proposal[GL] pins skills by name + version + SHA-256 hash + publisher + review status, enforceable via CI. pixi-skills ships skills as conda packages with run_constraints (e.g., polars>=1.38,<2.0) and pins exact versions in pixi.lock. EvoSkill uses git branches as skill versions — each evolution creates a new branch, with the Pareto frontier tracked via git tags.
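Enforcing a pin in CI comes down to recomputing the hash and comparing it with the lock entry. A minimal sketch, assuming the lock entry has already been parsed into a dict with the field names shown above; `verify_pin` and the `require_audited` policy flag are hypothetical, and the proposal itself may specify different checks.

```python
import hashlib


def verify_pin(skill_bytes: bytes, lock_entry: dict,
               require_audited: bool = True) -> bool:
    """Check a skill's content hash (and optionally review status) against its pin."""
    digest = hashlib.sha256(skill_bytes).hexdigest()
    if digest != lock_entry["sha256"]:
        return False  # content changed since it was pinned
    if require_audited and lock_entry.get("review_status") != "audited":
        return False  # policy assumption: only audited skills may run
    return True


if __name__ == "__main__":
    content = b"---\nname: web_search\n---\n# Web Search\n"
    entry = {
        "name": "web_search",
        "version": "1.3.2",
        "sha256": hashlib.sha256(content).hexdigest(),
        "publisher": "acme-corp",
        "review_status": "audited",
    }
    print(verify_pin(content, entry))
    print(verify_pin(content + b"tampered", entry))
```

Hashing the exact bytes that will be loaded, rather than trusting the version string, is what closes the window for a silent swap between invocations.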

| npm | Skill Ecosystem | Status |

|---|---|---|

| package.json | SKILL.md | Established |

| package-lock.json | agent.lock (proposed) | Proposed |

| npm install | skills add | Implemented |

| npm audit | skill-audit | Emerging |

| semver (^1.2.3) | Not yet standardised | Missing |

| npm registry | 280K+ skills on skillsmp.com | Live |

| npm unpublish | No equivalent | Missing |

26.1% of 42,447 analysed skills contain security vulnerabilities.[sec] Without agent.lock-style pinning, a supply-chain attack can silently replace a skill between invocations. The npm ecosystem learned this lesson with event-stream and ua-parser-js. The skill ecosystem hasn't learned it yet — but with 280K+ public skills and 46.3% duplication rates, the attack surface is already enormous.

"What the best systems have in common"

Across 50+ papers, ten patterns keep appearing. Not speculative — empirically validated by multiple independent teams.

Evolved skills beat human-curated skills

EvoSkills: 71.1% vs. 53.5% human-curated.[6] The gap is iteration count, not intelligence. Self-generated skills without iteration provide ≈0pp benefit (SkillsBench). But add a closed-loop evolution cycle — fail → reflect → mutate → validate — and the skills rapidly surpass human-authored ones. The key variable is iteration count: 3+ cycles typically suffice.

Description quality IS routing quality

SkillRouter found a 31–44pp accuracy drop when skill descriptions are truncated.[3] OpenAI reports negative examples reduce misfires by 20%.[5] A perfectly implemented skill with a poor description will never fire. The description is routing metadata, not documentation — it tells the system when, not how.

RL-trained routers destroy embedding similarity

Memento-Skills: 80% task success with RL-trained routing vs. 50% with BM25.[23] SkillOrchestra: +22.5% over SOTA with 700× less learning cost than Router-R1.[22] The 2026 shift: from "find the most similar skill" to "find the skill most likely to succeed at this task."

Smaller models + skills match larger models without

SkillsBench demonstrates this empirically: smaller models augmented with curated skills achieve competitive or better performance compared to larger models operating without skills.[2] AgentCPM takes it further: an 8B model with atomic skill RL matches closed-source frontier systems on DeepResearch Bench.[38]

Progressive disclosure is non-negotiable at scale

94% token reduction from three-tier progressive disclosure (HOT → WARM → COLD). Claude Code: 14,000 → 968 system tokens. Per-session cost: $3.00 → $0.32 for 100 tools. Without progressive disclosure, the tool selection cliff between 50–200 tools kills performance.[1]

Multi-agent helps parallelizable tasks, devastates sequential ones

Google Research: +80.9% on parallelizable tasks, but −39% to −70% on sequential tasks in multi-agent setups.[11] This is the most underappreciated finding. Skill routing must detect task structure before deciding delegation strategy. The answer to "should I use multi-agent?" is always "it depends on the task graph."

Production deployments validate at extreme scale

Meta REA: 2× model accuracy, 5× engineering productivity in production.[12] SAGE: 59% fewer tokens.[19] KernelEvolve: 60% GPU throughput improvement.[13] CORPGEN: 3.5× baseline with experiential learning.[16] These aren't benchmark numbers — they're production deployments.

The marketplace is exploding — and it's a security catastrophe

18.5× growth in 20 days. 40,285 skills audited. 46.3% duplicates. 9% critical-risk. >90% of popular skills fail rigorous audit.[42][44] Supply-chain poisoning via skill documentation achieves 11.6–33.5% bypass rates.[45] The npm analogy extends to npm's security nightmares.

Hierarchical skill organisation unlocks compositional reuse

SkillCraft: up to 80% token reduction from compositional skill caching.[25] SkillX auto-constructs 3-tier hierarchies from trajectories.[24] The pattern: atomic → functional → strategic. Skills compose like functions. Hierarchical organisation makes this composition efficient.

Metacognitive self-modification works — but scares everyone

HyperAgents outperforms across 4 diverse domains.[14] Constitutional Evolution beats human-designed norms by 123%.[27] These systems modify their own modification procedures. The empirical results are compelling. The formal safety guarantees are absent. Sandboxed experiments only — no one has deployed this in production.

"Improved skill" is just a hypothesis until it's measured

The evolution loop has a hole: how do you know a mutated skill is better? Without evaluation, evolution is random walk. The fitness function IS the evaluation.[48]

A skill can hurt. SkillsBench found some skills produce negative deltas. As models improve, once-essential skills become counterproductive. Boris Cherny on the Claude Code team put it directly: "Delete your claude.md and add back a little bit at a time. With every model you have to add less and less."

Skill quality is task-dependent and model-dependent. Transformative for one task, guardrail for another, harmful for a third. Without A/B evaluation against a no-skill baseline, the evolution loop has no fitness signal.

Three ingredients of a real skill evaluation

OpenHands' evaluating-skills-tutorial formalises the minimum viable evaluation. Every evaluation requires exactly three things — if any one is missing, you're not really measuring:

① BOUNDED TASK

One run, one artifact, one answer

The task must be small enough that the agent finishes in one run, a human can understand success, and failures are diagnosable. One output artifact — report.json, answers.json, result.xlsx — not a subjective assessment of vibes.

② DETERMINISTIC VERIFIER

Pass/fail with no human judgment

The verifier checks required fields exist, values match within tolerance, expected structure is present. It must say pass or fail without subjective judgment. "The answer feels good" is not a verifier. assert expected_findings ⊆ actual_findings is.

③ NO-SKILL BASELINE

Compare, don't just measure

Run the same task without the skill first. Without a baseline, you can't tell if the task is already easy, if the skill actually improved anything, or if the skill made things worse. This is the most common evaluation mistake: only testing the skill-enabled version.
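The three ingredients can be sketched in a few lines of Python. This is an illustrative sketch, not the OpenHands implementation: the report fields (`status`, `total`, `findings`), the sample runs, and the tolerance are all hypothetical, and the verifier assumes list entries are hashable values such as strings.

```python
import math


def verify(expected: dict, actual: dict, rel_tol: float = 1e-6) -> bool:
    """Return True only if every expected field is present and matches."""
    for key, want in expected.items():
        if key not in actual:
            return False  # required field missing
        got = actual[key]
        if isinstance(want, list):
            # expected findings must be a subset of the actual findings
            if not isinstance(got, list) or not set(want) <= set(got):
                return False
        elif isinstance(want, float):
            # numeric values only need to match within tolerance
            if not isinstance(got, (int, float)):
                return False
            if not math.isclose(want, got, rel_tol=rel_tol):
                return False
        elif want != got:
            return False
    return True


def pass_rate(expected: dict, outputs: list) -> float:
    """Fraction of run outputs that the verifier accepts."""
    return sum(verify(expected, out) for out in outputs) / len(outputs)


# Hypothetical ground truth and run outputs for one bounded task.
expected = {"status": "ok", "total": 1250.0, "findings": ["finding-a", "finding-b"]}
no_skill_runs = [
    {"status": "ok", "total": 1250.0, "findings": ["finding-a"]},  # misses one finding
    {"status": "ok", "total": 1250.0, "findings": ["finding-a", "finding-b"]},
]
skill_runs = [
    {"status": "ok", "total": 1250.0, "findings": ["finding-b", "finding-a", "finding-c"]},
    {"status": "ok", "total": 1250.0000001, "findings": ["finding-a", "finding-b"]},
]

baseline = pass_rate(expected, no_skill_runs)
with_skill = pass_rate(expected, skill_runs)
print(f"no-skill={baseline:.0%} skill={with_skill:.0%} delta={with_skill - baseline:+.0%}")
```

The verifier never inspects how the agent got there; it only checks the artifact, which is what keeps the comparison between the two conditions fair.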

Three archetypes of skill impact

OpenHands tested three tasks across five models (Claude Sonnet 4.5, Gemini 3 Pro, Gemini 3 Flash, Kimi K2, MiniMax M2.5) and discovered that skill impact falls into three distinct archetypes — each requiring a different response from the evolution loop:

The skill IS the capability

Dependency audit: 0% → 100% pass rate. 266s → 109s runtime. 53 → 22 events. Without the skill, agents improvise broken workflows. The skill encodes the actual procedure the task requires — tool selection, ordering, filtering, output format.[48]

| Condition | Pass | Runtime | Events |
|---|---|---|---|
| no-skill | 0% | 266s | 53 |
| skill | 100% | 109s | 22 |

The skill adds consistency

Financial report extraction: 90% → 100%. The task is already mostly solvable — the skill adds exact formulas, local-file instructions, and Python-for-arithmetic guidance. It's a safety net against edge-case failures, not an unlock.

| Condition | Pass | Runtime |
|---|---|---|
| no-skill | 90% | 87s |
| skill | 100% | 99s |

The skill makes things worse

Sales pivot analysis: 70% → 80% aggregate, but model-dependent. Claude Sonnet 4.5 passed no-skill but failed with the skill on cloud. The skill nudged it into a brittle workbook-construction path. "Improved" was a hypothesis — measurement disproved it.

| Condition | Pass | Notes |
|---|---|---|
| no-skill | 70% | some models excel |
| skill | 80% | some models regress |

Wiring evaluation into the evolution loop

This is where evaluation becomes the engine of evolution rather than a post-hoc check. The verifier is the fitness function. The trace is the diagnostic signal. Together, they close the loop.

The critical connection: each evaluation outcome maps to a specific evolution action. This is what transforms random mutation into directed improvement. Without this mapping, the evolution loop in Section 4 is just genetic drift. With it, skills converge.
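One way to make the outcome-to-action mapping explicit is a small decision function. The sketch below is an illustration, not the survey's or OpenHands' implementation: it encodes the three outcomes named in this section's evaluation steps (win → promote, loss → inspect trace and mutate, no difference → deprecation candidate), and the `min_delta` and `min_speedup` thresholds are assumed values.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    PROMOTE = "promote"
    MUTATE = "inspect trace, mutate, re-run"
    DEPRECATE = "candidate for deprecation"


@dataclass(frozen=True)
class EvalResult:
    pass_rate: float  # fraction of runs accepted by the verifier
    runtime_s: float  # mean runtime in seconds


def next_action(no_skill: EvalResult, with_skill: EvalResult,
                min_delta: float = 0.05, min_speedup: float = 0.10) -> Action:
    """Map an evaluation outcome to an evolution action (pass/fail first)."""
    delta = with_skill.pass_rate - no_skill.pass_rate
    if delta >= min_delta:
        return Action.PROMOTE
    if delta <= -min_delta:
        return Action.MUTATE
    # Pass rates tie: fall back to runtime as the secondary metric.
    if with_skill.runtime_s <= no_skill.runtime_s * (1 - min_speedup):
        return Action.PROMOTE
    return Action.DEPRECATE


# Dependency-audit figures from this section: 0% -> 100%, 266s -> 109s.
print(next_action(EvalResult(0.0, 266), EvalResult(1.0, 109)))
# A hypothetical regression and a hypothetical tie.
print(next_action(EvalResult(1.0, 90), EvalResult(0.0, 120)))
print(next_action(EvalResult(1.0, 90), EvalResult(1.0, 95)))
```

Keeping pass rate ahead of runtime in the decision order mirrors the "pass/fail first" rule: a faster skill that passes less often is never promoted.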

Traces explain what verifiers can't

Pass/fail tells you whether the skill worked. Traces tell you why. In failed skill-enabled runs, traces reveal overconstraint (the skill pushed the agent into a brittle path), unnecessary exploration (the skill didn't narrow the search enough), or tool misselection (the skill recommended the wrong tool). This diagnostic signal is what makes the REFLECT step in the evolution loop actionable — without traces, mutation is blind.

Model-dependent evaluation enables model-aware routing

OpenHands found that Claude Sonnet 4.5 passed no-skill but failed with the skill on the sales pivot task. The same skill helped other models. This means evaluation must be run per-model, and the skill router must be model-aware: skill X may be essential for Haiku but counterproductive for Opus. The router needs a model×skill fitness matrix, not a single fitness score.
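A model×skill fitness matrix can be as simple as a dictionary keyed by (model, skill) pairs. The following is a minimal sketch under stated assumptions: the class name, the `None` sentinel for the no-skill baseline, the placeholder model and skill names, and the policy of not routing unevaluated pairs are all hypothetical choices, not something the cited work prescribes.

```python
from typing import Dict, List, Optional, Tuple

NO_SKILL = None  # sentinel key for the no-skill baseline (assumed convention)


class FitnessMatrix:
    """Per-model pass counts for each skill, plus the no-skill baseline."""

    def __init__(self) -> None:
        # (model, skill) -> [passes, total runs]
        self._cells: Dict[Tuple[str, Optional[str]], List[int]] = {}

    def record(self, model: str, skill: Optional[str], passed: bool) -> None:
        cell = self._cells.setdefault((model, skill), [0, 0])
        cell[0] += int(passed)
        cell[1] += 1

    def pass_rate(self, model: str, skill: Optional[str]) -> Optional[float]:
        cell = self._cells.get((model, skill))
        return cell[0] / cell[1] if cell else None

    def should_load(self, model: str, skill: str) -> bool:
        """Load a skill only if it beats the no-skill baseline on this model."""
        with_skill = self.pass_rate(model, skill)
        baseline = self.pass_rate(model, NO_SKILL)
        if with_skill is None or baseline is None:
            return False  # unevaluated pairs are not routed (assumed policy)
        return with_skill > baseline


matrix = FitnessMatrix()
# Hypothetical results: the same skill hurts one model and helps another.
matrix.record("model-a", NO_SKILL, True)
matrix.record("model-a", "sales-pivot", False)
matrix.record("model-b", NO_SKILL, False)
matrix.record("model-b", "sales-pivot", True)

print(matrix.should_load("model-a", "sales-pivot"))
print(matrix.should_load("model-b", "sales-pivot"))
```

A single scalar score would average these two rows together and hide exactly the regression the evaluation uncovered.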

Skill decay is real and must be monitored continuously

As models improve, skills that were once essential become unnecessary. The evaluation loop must re-run periodically against new model versions. A skill with confidence: verified on Sonnet 4.5 may need confidence: deprecated on Sonnet 5. The lifecycle isn't just unvalidated → verified — it's a continuous re-evaluation that can demote previously trusted skills.

Practical: how to wire this today

OpenHands' evaluating-skills-tutorial provides the concrete implementation pattern. The key design decisions that make it work as an evolution engine:

    # The minimum viable evaluation loop
    # (adapted from OpenHands evaluating-skills-tutorial)
    tasks/
      my-task/
        task_prompt.txt        # bounded goal + output contract
        expected_report.json   # ground truth
        input/                 # local artifacts (no network)
        output/                # where agent writes result
        skills/
          baseline/SKILL.md    # current production skill
          improved/SKILL.md    # candidate mutation
        verify.py              # deterministic pass/fail

    # 1. Run no-skill baseline
    uv run python scripts/run_eval.py --condition no-skill

    # 2. Run with skill
    uv run python scripts/run_eval.py --condition improved-skill

    # 3. Compare: pass/fail first, then runtime + events
    uv run python scripts/compare_runs.py

    # 4. If improved-skill wins → promote (↑ confidence, ↑ Q-value)
    # 5. If improved-skill loses → inspect trace → mutate → re-run
    # 6. If no difference → candidate for deprecation

Before calling a skill evaluation valid, check: Did I compare against no-skill? Did I keep the task fixed across conditions? Did I use a deterministic verifier? Did I measure pass/fail first? Did I look at runtime as a secondary metric? Did I inspect traces only to explain behavior, not to define success? Did I test across more than one model if making a general claim? If several answers are no, the evaluation is too weak to drive evolution.[49]

Beyond pass/fail: what 2026 research actually proposes

The OpenHands framework is the minimum viable evaluation. But the Feb–April 2026 research wave produced significantly more sophisticated approaches. Six techniques go beyond binary pass/fail in ways that directly improve evolution loop convergence:

CO-EVOLUTIONARY SURROGATE VERIFICATION

An isolated LLM generates its own test suite — no ground truth needed. EvoSkills' surrogate verifier operates in a completely separate session with no access to the skill generator's code or reasoning. It synthesises per-assertion diagnostics, provides root-cause analysis, and escalates its own test suite when the oracle reveals gaps. Ablation: removing it drops pass rate from 71.1% to 41.1% — 30pp of the total gain comes from the surrogate alone.[6]

pass@k VS pass^k

Capability and reliability tell opposite stories. pass@k = probability of ≥1 success in k trials (rises with k). pass^k = probability ALL k trials succeed (falls with k). At 75% per-trial success: pass@5 ≈ 100%, but pass^5 = 24%. Production skills need pass^k; research claims use pass@k. Conflating them is how teams ship "90% accurate" skills that fail 1-in-3 for users.[50]
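The two metrics are easy to compute side by side. This sketch assumes independent trials that share the same per-trial success probability `p`, which is the simplification behind the 75% example above; the function names are illustrative.

```python
def pass_at_k(p: float, k: int) -> float:
    """Probability of at least one success in k independent trials."""
    return 1 - (1 - p) ** k


def pass_hat_k(p: float, k: int) -> float:
    """Probability that all k independent trials succeed (pass^k)."""
    return p ** k


p = 0.75
for k in (1, 3, 5):
    print(f"k={k}: pass@k={pass_at_k(p, k):.3f}  pass^k={pass_hat_k(p, k):.3f}")
```

At k=1 the two metrics coincide; every additional trial pushes them further apart, which is why a claim has to state which one it reports.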

CONTINUOUS EVALUATION (EvoClaw)

80% on isolated tasks → 38% on continuous tasks. EvoClaw evaluates agents on sequences of dependent tasks where early mistakes compound downstream. The metric decomposes into Recall (features implemented) and Precision (code not broken). Finding: Recall grows linearly but Precision saturates across all 15 agent-model configurations — a universal ceiling.[51]

PROCESS REWARD MODELS

Step-level signals, not just task-level outcomes. AgentPRM redefines process reward as Promise (proximity to goal) + Progress (marginal contribution of each action). Uses TD-estimation + GAE to generate labels without human annotation. Result: 8× more compute-efficient than task-level-only evaluation for equivalent quality.[52]

ABILITY DEMAND PROFILING (ADeLe)

Predict success with ~90% accuracy before running. Microsoft's ADeLe (Nature, April 2026) maps 18 cognitive ability dimensions across 16,108 instances. Instead of "did the model pass?" it asks "which abilities does this task demand, and at what level?" — enabling targeted skill development for specific capability gaps.[43]

SKILL RETIREMENT SIGNAL

If the base model passes without the skill, delete the skill. Anthropic and Google independently converge: run evals with the skill disabled periodically. If the pass rate holds, the skill's techniques have been absorbed into the model's default behaviour. The skill isn't broken — it's unnecessary. Skill lifecycle must include deprecation, not just promotion.[53]

The CI/CD integration pattern

Every production team surveyed — Galileo, LangChain, Mindra, Braintrust, Anthropic — independently converged on the same four-tier evaluation trigger hierarchy.[54] This is not optional infrastructure — it's how skill evaluation becomes continuous rather than episodic:

| Trigger | When | Grader Type | Gate |
|---|---|---|---|
| Every commit / PR | Code or skill change | Deterministic (fast, cheap) | Block merge |
| Every merge | Integration test | Agent behaviour + trajectory | Block deploy |
| Daily / weekly | Scheduled | Full regression + model drift detection | Alert team |
| Event-driven | Telemetry anomaly, user feedback spike | Deep eval + skill retirement check | Auto-rollback |

Galileo's progressive deployment gates make this concrete: 70% task success to pass dev → 85% for staging → 95% for production. Canary at 5% of traffic, monitor 24-48h, expand if stable, auto-rollback on degradation. Mindra adds a four-layer testing pyramid: tool unit tests (every PR, <2 min) → agent behaviour tests (<10 min) → pipeline integration tests (on merge, <30 min) → end-to-end regression (nightly, <2 hrs).
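The gate thresholds translate directly into a small check. The sketch below is a hypothetical illustration, not Galileo's tooling: the 70% / 85% / 95% thresholds and the 5% canary share come from the text, while the function names and the `max_drop` rollback tolerance are assumptions.

```python
from typing import List

# Thresholds quoted in the text; stage names are used as plain labels.
GATES = [("dev", 0.70), ("staging", 0.85), ("production", 0.95)]
CANARY_TRAFFIC = 0.05  # share of traffic routed to the candidate skill


def gates_passed(task_success: float) -> List[str]:
    """Return the gates a candidate clears, in order, stopping at the first miss."""
    passed = []
    for stage, threshold in GATES:
        if task_success < threshold:
            break
        passed.append(stage)
    return passed


def canary_decision(baseline_success: float, canary_success: float,
                    max_drop: float = 0.02) -> str:
    """Expand the rollout if the canary holds up, otherwise roll back."""
    if canary_success < baseline_success - max_drop:
        return "rollback"
    return "expand"


print(gates_passed(0.88))
print(canary_decision(baseline_success=0.95, canary_success=0.90))
```

In a real pipeline the canary comparison would run over the 24-48h monitoring window rather than on a single pair of numbers.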

Three independent sources (Google Cloud, OpenAI, and the SoK survey) flagged token efficiency as the most overlooked evaluation dimension. When two runs produce identical correct output but one burns 3× the tokens, that is a production bug, not a tie. The full evaluation stack: pass/fail first → runtime + event count → token budget → trace quality. Deterministic checks first (free, reproducible); LLM-as-judge only for qualitative dimensions that can't be automated.[55]

"Skills can fail, decay, and be weaponized — and all three are happening right now"

40K+ skills in 20 days is also 40K+ failure vectors. Skills can fail, actively hurt (negative transfer), rot (decay), and get weaponised (supply-chain attacks). All four are happening now.

Part 1: How Skills Fail — The Taxonomy

The MAST Taxonomy: 14 failure modes across 1,642 annotated traces

NeurIPS 2025 spotlight. The first systematic taxonomy of multi-agent skill failures. The critical finding: architecture determines failure profile, not model. MetaGPT and ChatDev running identical GPT-4o exhibit entirely different failure distributions.

MAST: 3 categories · 14 failure modes · 1,642 traces

FC1 · SYSTEM DESIGN (5)

Step repetition: 15.7% — the #1 failure mode. Unrecognised completion: 12.4%. Disobey spec: 11.8%. Plus format deviation and resource waste.

FC2 · INTER-AGENT (6)

Task derailment, info withholding, ignored input. Plus role confusion, conflicting plans, and redundant execution across agents.

FC3 · VERIFICATION (3)

Premature termination, incomplete verification, incorrect verification. The most insidious: silent corruption of downstream state.

Negative Transfer — When skills actively hurt performance

SkillsBench: 86 tasks, 7,308 trajectories. Self-generated skills: −1.8pp. Comprehensive (verbose) skills: −2.9pp. The sweet spot is narrow — 2–3 curated skills yield +18.6pp, but 4+ skills collapse to +5.9pp. Worst case: taxonomy-tree-merge at −39.3pp.[2]

Five conditions predict when adding a skill will hurt: (1) domain well-covered in pretraining, (2) documentation is exhaustive rather than concise, (3) more than 3 skills loaded simultaneously, (4) skills self-generated without iteration, (5) model already has strong task priors. When all five align, skills are strictly worse than baseline.

Skill Decay — Four drift types

Goal drift: business objectives shift. Context drift: APIs update, libraries version-bump. Reasoning drift: newer models reason differently, once-helpful hints become overconstraining. Collaboration drift: partner agents evolve their protocols. The Order Management Agent illustrates: 90.5% → 81.7% → 69.95% over 8 weeks — the skill was frozen, the environment was not.

| Skill Category | Decay Rate | Primary Driver | Half-life |
|---|---|---|---|
| API integration | Fastest | Endpoint changes, auth updates, schema drift | ~3–6 weeks |
| Infrastructure / DevOps | Fast | CLI version bumps, config format changes | ~6–10 weeks |
| Framework-specific | Moderate | Major version releases, deprecation cycles | ~3–6 months |
| Architecture patterns | Slow | Paradigm shifts, best-practice evolution | ~6–12 months |
| Generic programming | Slowest | Language semantics stable; model priors strengthen | ~12+ months |

Part 2: The Security Crisis — Deep Dive

The DDIPE Attack: supply-chain poisoning through documentation

Document-Driven Implicit Payload Execution. The attack embeds malicious code in SKILL.md documentation — not source code. Agents reproduce reference implementations as "best practice" and the payload executes. Entry barrier: a GitHub account and a SKILL.md file. 4 CVEs filed.[45]

Snyk ToxicSkills: 3,984 skills audited, 76 confirmed malicious

13.4% CRITICAL severity. 36.82% flagged with any issue. 76 deliberately weaponized. An 8-category threat taxonomy: credential theft, data exfiltration, dependency confusion, prompt injection, persistent backdoors, privilege escalation, lateral movement, and environment poisoning.

Not anonymous. zaycv: 40+ automated malicious submissions using template-based generation, credential-harvesting payloads. Aslaep123: multi-stage payloads with delayed activation. aztr0nutzs: environment-poisoning — modifying pip.conf, .npmrc, and shell profiles to redirect package installs.

Why AI skills are WORSE than traditional packages

| Surface | npm / PyPI | AI Skills |
|---|---|---|
| Default privilege | Sandboxed, explicit perms | Full agent permissions: FS, network, shell |
| Attack vector | Code only | Code + NL prompt injection in documentation |
| Persistence | Files on disk | Files + agent memory + learned behaviours |
| Detection | Static analysis catches most | Semantic disguise; only 1.6% detectable across models |
| Supply chain | Visible dep tree (lock files) | Runtime-loaded, dynamically discovered deps |
| Review gate | Code review + CI/CD + SAST | Agent auto-installs at runtime, no human in loop |
| Blast radius | Application-scoped | Cross-agent: skills propagate via collective evolution |

The fundamental asymmetry: packages contain code that tools analyse. Skills contain natural language that tools can't statically reason about.

Part 3: Defense Architecture

"Skills-for-Skills" auditing

Multi-agent auditing — security agents evaluate other agents' skills. Catches systemic risk patterns. Identifies the giant connected component of high-risk skills sharing vulnerable dependencies.[44]

Three-layer security architecture

Skill-layer sandbox + Plugin-layer isolation + Watcher-layer (out-of-process state-evolution monitoring). The Watcher kills mutations before they affect the host.[46]

Out-of-process policy enforcement

Self-evolving skills execute in a sandbox. A separate watcher monitors state evolution. Policy violations killed pre-commit. Hardware isolation via Firecracker microVMs when available.

4-tier governance framework

7 design patterns + security taxonomy. Trust & Lifecycle Governance with 4 trust tiers. ClawHavoc red-team: 1,200 malicious skills exfiltrating credentials.[47]

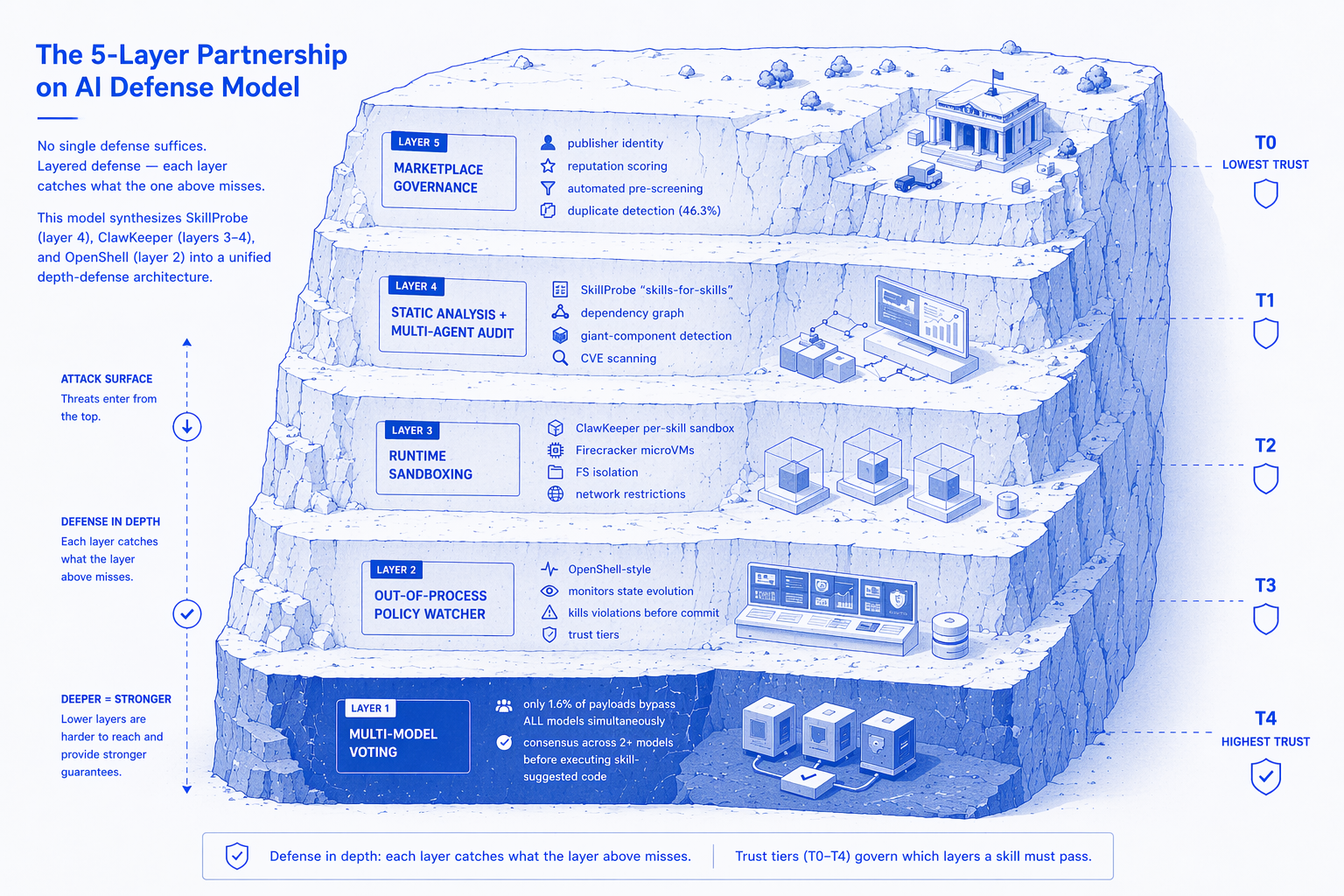

The 5-Layer Partnership on AI Defense Model

No single defense suffices. In a layered defense, each layer catches what the one above misses. This model synthesises SkillProbe (layer 4), ClawKeeper (layers 3–4), and OpenShell (layer 2) into a unified defense-in-depth architecture.

None of this infrastructure exists in production today. The marketplace grew 18.5× in 20 days. The security tooling grew approximately 0×. We are in the npm circa 2016 moment: explosive growth, minimal governance, active adversaries. The difference: AI skills have a strictly larger attack surface than npm packages — and the blast radius is cross-agent, not just cross-application.

"Every token is either consumed or invested — the gap determines your ROI"

Skill evolution burns tokens. Every mutation cycle, every reflection step, every failed attempt. The question: which skills to evolve, when to stop, and how fast does it pay back.

The Agentic ROI Framework

The Tsinghua/Oxford Knowledge Compounding study[KC] introduces a formal ROI metric for skill investment across domains:

ROI_i = (ΔQ_i × ΔT_i) / C_i

ΔQ_i = quality improvement from skill reuse in domain i

ΔT_i = time savings (fewer steps, fewer tokens)

C_i = total cost of skill creation + evolution

The domain ranking is striking — and counterintuitive:

| Domain | Agentic ROI | User Demand | Explanation |
|---|---|---|---|
| Coding | Highest | High | Narrow topic clusters → skills accumulate and compound |
| Scientific Research | High | Medium | Structured methodologies reuse well across papers |
| Office Automation | Medium | High | Repetitive workflows, but high format variance |
| E-commerce | Low | High | Product catalogues shift faster than skills can stabilise |
| Personal Assistance | Lowest | Highest | Diffuse topics can't accumulate reusable skill knowledge |

The domains with the highest user demand have the lowest agentic ROI. Personal assistance is what everyone wants — but its diffuse topic distribution means each interaction is essentially novel. Coding, by contrast, has concentrated topic clusters where a single skill (e.g., "write pytest fixtures") fires hundreds of times. This is the central economic tension of skill evolution: invest where compounding works, not where users ask loudest.

Concrete Token Savings

Four independent production systems quantify the returns:

The Capital Goods Reframing

The most provocative claim from the Knowledge Compounding paper: LLM tokens spent on skill evolution should be reclassified from consumables to capital goods — analogous to SFAS 86 treatment of software development costs. Four properties justify this:

Skills outlive the session

Unlike chat tokens that vanish at session end, evolved skills persist as durable artefacts — SKILL.md files, code libraries, wiki syntheses — reusable across future sessions.

Each use amortises the cost

A skill invoked N times returns N × savings. The marginal cost of the 100th invocation is zero; the marginal value is identical to the first.

Skills transfer across model generations

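SKILL.md is model-agnostic text. Skills authored for GPT-4o run on Claude, Gemini, or next-generation models, so the artefact itself survives model upgrades. Effectiveness is a separate question: because skill impact is model-dependent, a transferred skill still needs re-evaluation against a no-skill baseline on the new model.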

Skills appreciate with use

Evolution cycles improve skills over time. Unlike physical capital, skill artefacts gain value through iteration — the opposite of depreciation.

The compounding dynamics follow a learning curve:

H(i+1) = H(i) + α × (1 - H(i)) × p(i)

H(i) = knowledge harvest at step i

α = 0.18 (empirically fitted learning rate)

p(i) = probability of finding new knowledge at step i
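To see the shape of this curve, the recurrence can be iterated directly. The sketch below is an illustration only: α = 0.18 is the fitted value quoted above, but the constant p(i) = 1.0 and the starting point H(0) = 0 are assumptions made here to keep the example simple, not values from the paper.

```python
from typing import Callable, List

ALPHA = 0.18  # learning rate quoted in the text


def harvest_curve(steps: int,
                  p: Callable[[int], float] = lambda i: 1.0,
                  alpha: float = ALPHA,
                  h0: float = 0.0) -> List[float]:
    """Iterate H(i+1) = H(i) + alpha * (1 - H(i)) * p(i) for `steps` steps."""
    curve = [h0]
    for i in range(steps):
        h = curve[-1]
        curve.append(h + alpha * (1 - h) * p(i))
    return curve


curve = harvest_curve(10)
for i, h in enumerate(curve):
    print(f"step {i:2d}: H = {h:.3f}")
```

Under these assumptions the remaining gap (1 - H) shrinks by a factor of (1 - α) each step, so H(n) = 1 - (1 - α)^n: fast early gains that flatten as the harvest approaches 1.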

"Compounding never wins on raw token count"

In a 4-query experiment, Chunk-RAG used 13.6K tokens total. Compounding used 47K — 3.4× more. But at day 30, the compounding system had produced ~270 persistent wiki pages. Chunk-RAG had produced nothing persistent. The tokens were consumed, not invested. Capital accounting changes the entire calculus: compounding's "cost" is actually an asset on the balance sheet.

The Marketplace at Scale

The skill marketplace ecosystem provides the clearest signal that skill evolution is not theoretical — it's a live economy with supply curves, demand curves, and market failures.[ASE]

The Cost of Not Evolving

The asymmetry is stark: the cost of skill evolution is finite and bounded; the cost of stasis compounds indefinitely.

Static skills provide ≈0pp benefit

SkillsBench demonstrates that self-generated skills without iteration provide approximately zero improvement over baseline. The act of writing a skill isn't what creates value — the evolution loop is.[SB]

10.7% of skills become obsolete

SkillReducer analysis of real-world skill repositories: the original trigger description fails to activate 10.7% of skills over time. Without active maintenance, over one-tenth of your skill library is dead weight consuming retrieval bandwidth.

Without maintenance: 4.5× bloat, 8.9× utilisation decay

AutoRefine quantifies the decay: unmanaged skill repositories grow 4.5× in size while utilisation rate degrades 8.9× — skills accumulate but stop firing, crowding the retrieval space and poisoning the router's selection accuracy.[AR]

Context rot cliff at ~60% fill

When skill context consumes >60% of the available context window, agent performance degrades non-linearly. The model can no longer attend to the task itself — it's drowning in stale skill definitions. Progressive disclosure and active pruning aren't optimisations; they're survival mechanisms.

AutoRefine's scoring formula captures the tradeoff: Score = effectiveness × frequency × precision. Prune the bottom 20th percentile at exponentially spaced intervals. The result: a compact, high-utilisation skill library that stays healthy over time — rather than an ever-growing graveyard of unused artefacts.
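The scoring and pruning rule can be sketched as follows. This is a hypothetical illustration rather than AutoRefine's code: the formula and the bottom-20% cut come from the text, while the skill names, the sample statistics, and the doubling schedule (one reading of "exponentially spaced intervals") are assumptions.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class SkillStats:
    name: str
    effectiveness: float  # e.g. pass-rate gain when the skill fires
    frequency: float      # how often the skill is invoked
    precision: float      # share of firings that were appropriate

    @property
    def score(self) -> float:
        return self.effectiveness * self.frequency * self.precision


def prune(skills: List[SkillStats],
          fraction: float = 0.20) -> Tuple[List[SkillStats], List[SkillStats]]:
    """Split skills into (kept, dropped), dropping the lowest-scoring fraction."""
    ranked = sorted(skills, key=lambda s: s.score)
    n_drop = int(len(ranked) * fraction)
    return ranked[n_drop:], ranked[:n_drop]


def prune_schedule(first_interval: int = 1, count: int = 5, base: int = 2) -> List[int]:
    """Exponentially spaced gaps between pruning passes (units are up to the caller)."""
    return [first_interval * base ** i for i in range(count)]


library = [
    SkillStats("skill-a", 0.9, 100, 0.9),
    SkillStats("skill-b", 0.5, 10, 0.5),
    SkillStats("skill-c", 0.8, 50, 0.7),
    SkillStats("skill-d", 0.2, 2, 0.3),
    SkillStats("skill-e", 0.7, 30, 0.9),
]
kept, dropped = prune(library)
print("dropped:", [s.name for s in dropped])
print("schedule:", prune_schedule())
```

Because the three factors multiply, a skill that is effective but never fires scores as low as one that fires constantly but rarely helps.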

The Breakeven Calculation

Skill evolution costs are front-loaded. EvoSkills achieves ~71% pass rate in 3+ iterations, where each iteration equals one LLM call. Assume 3 evolution calls at ~1,000 tokens each = 3,000 tokens invested. If each subsequent invocation saves 59% of an average 800-token call (~472 tokens saved), the payback schedule is fast:

THE INVESTMENT CASE

~7 invocations to breakeven

3 evolution iterations × ~1K tokens = 3K tokens invested. Each invocation saves ~472 tokens (59% of 800). Breakeven at invocation 7. By invocation 30, you've netted ~11K tokens in pure savings — a 3.7× return.
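The arithmetic behind these figures is short enough to check directly. The sketch below only re-derives the numbers stated above (3 iterations × ~1K tokens, 59% savings on an 800-token call); the function names are illustrative.

```python
import math


def breakeven_invocation(invested_tokens: float, saved_per_call: float) -> int:
    """First invocation at which cumulative savings cover the investment."""
    return math.ceil(invested_tokens / saved_per_call)


def net_savings(invested_tokens: float, saved_per_call: float, invocations: int) -> float:
    """Cumulative savings minus the up-front evolution cost."""
    return invocations * saved_per_call - invested_tokens


invested = 3 * 1_000          # 3 evolution iterations at ~1K tokens each
saved_per_call = 0.59 * 800   # 59% of an 800-token call

breakeven = breakeven_invocation(invested, saved_per_call)
net_at_30 = net_savings(invested, saved_per_call, 30)
print(f"saved per call: {saved_per_call:.0f} tokens")
print(f"breakeven at invocation {breakeven}")
print(f"net after 30 invocations: {net_at_30:.0f} tokens ({net_at_30 / invested:.1f}x the investment)")
```

The same two functions make it easy to test less favourable assumptions, such as more evolution iterations or a smaller per-call saving, before committing to evolve a given skill.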

THE STASIS COST

Unlimited downside

Without evolution: 10.7% skill obsolescence, 4.5× repository bloat, 8.9× utilisation decay. The non-evolving system doesn't stay constant — it actively degrades. Context rot, router poisoning, and retrieval dilution compound indefinitely.

The economics are unambiguous. Skill evolution is not a research curiosity — it's an arbitrage. The only question is which skills sit in the high-ROI quadrant (narrow domain, high reuse frequency, stable topic distribution) and which ones don't warrant the investment. Coding, infrastructure automation, and structured data workflows are the obvious first movers. Personal assistance, creative writing, and open-ended research are last — not because they're unimportant, but because their diffuse topic distributions can't accumulate reusable skill capital fast enough to justify the evolution cost.

What we'd build if we started from scratch

Every finding in this survey points in the same direction. Synthesising across all 50+ papers, all lab reports, and all production deployments, here is an opinionated architecture for skill management in multi-agent systems. Not a compromise — a synthesis.

The architecture has six layers. Each addresses a specific finding from the research. Here's the rationale:

Design principles — what the research tells us to build

PRINCIPLE 1

Skills are the npm of agents. Versioned, composable, publishable, installable. The SKILL.md format is the package.json. Progressive disclosure is lazy loading. The marketplace is npm. The security crisis is npm audit.

PRINCIPLE 2

Evolution must be closed-loop. Static skills decay. Self-generated skills without iteration are worthless. But add the execute → fail → reflect → mutate → validate loop and evolved skills surpass human-curated in 3+ iterations. Evolution is non-optional.

PRINCIPLE 3

The router is the brain, not the model. An RL-trained behavioural router with full-text retrieval, multi-stage reranking, and task-graph detection. Not embedding similarity — task-success prediction. The router determines 80% vs. 50% task success.

PRINCIPLE 4

Security is Layer 0, not Layer N. Out-of-process watchers. Trust tiers. Unit-test gates on every mutation. No skill enters production without passing validation — just as no npm package should ship without CI. The 90%+ audit failure rate is a five-alarm fire.

PRINCIPLE 5

Hierarchy is free performance. Atomic → functional → strategic. Auto-constructed from trajectories. Compositional caching yields 80% token reduction. The cost of building the hierarchy is paid once; the savings compound on every subsequent invocation.

PRINCIPLE 6

Detect task structure before delegating. Multi-agent helps parallelizable tasks (+80.9%) and devastates sequential ones (−39% to −70%). The router must inspect the task dependency graph before deciding whether to delegate to sub-agents or handle in the main context.

PRINCIPLE 7

Evaluation is the fitness function. Every mutation must pass a deterministic verifier against a no-skill baseline before promotion. Traces provide the diagnostic signal for targeted mutation. Skills are model-dependent — the fitness matrix is model×skill, not scalar. Continuously re-evaluate against new model versions to detect skill decay.[48]

By the end of 2026, the dominant agent architecture will look like a compiler pipeline: parse the task into a dependency graph, route each subgraph to the optimal skill + model + agent type combination, execute in isolated contexts with progressive disclosure, evaluate with deterministic verifiers against no-skill baselines, and evolve the skill library from trace-informed mutation with per-model fitness tracking. The agent that does this best won't be the one with the biggest model — it'll be the one with the best skill management system.